Creative Insights

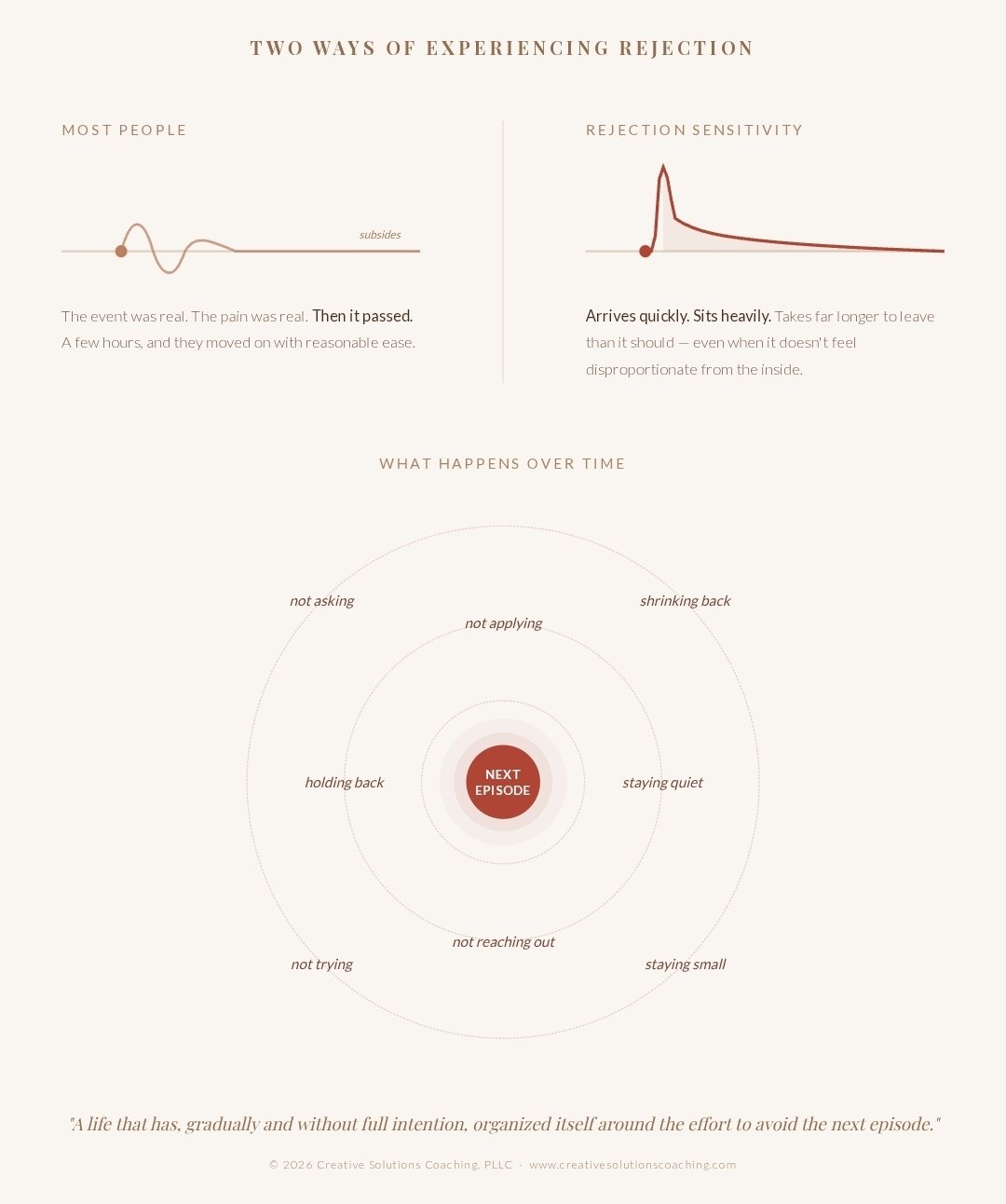

Rejection Sensitivity

The science of rejection sensitivity and why certain nervous systems register social pain with an intensity that can stop the day cold.

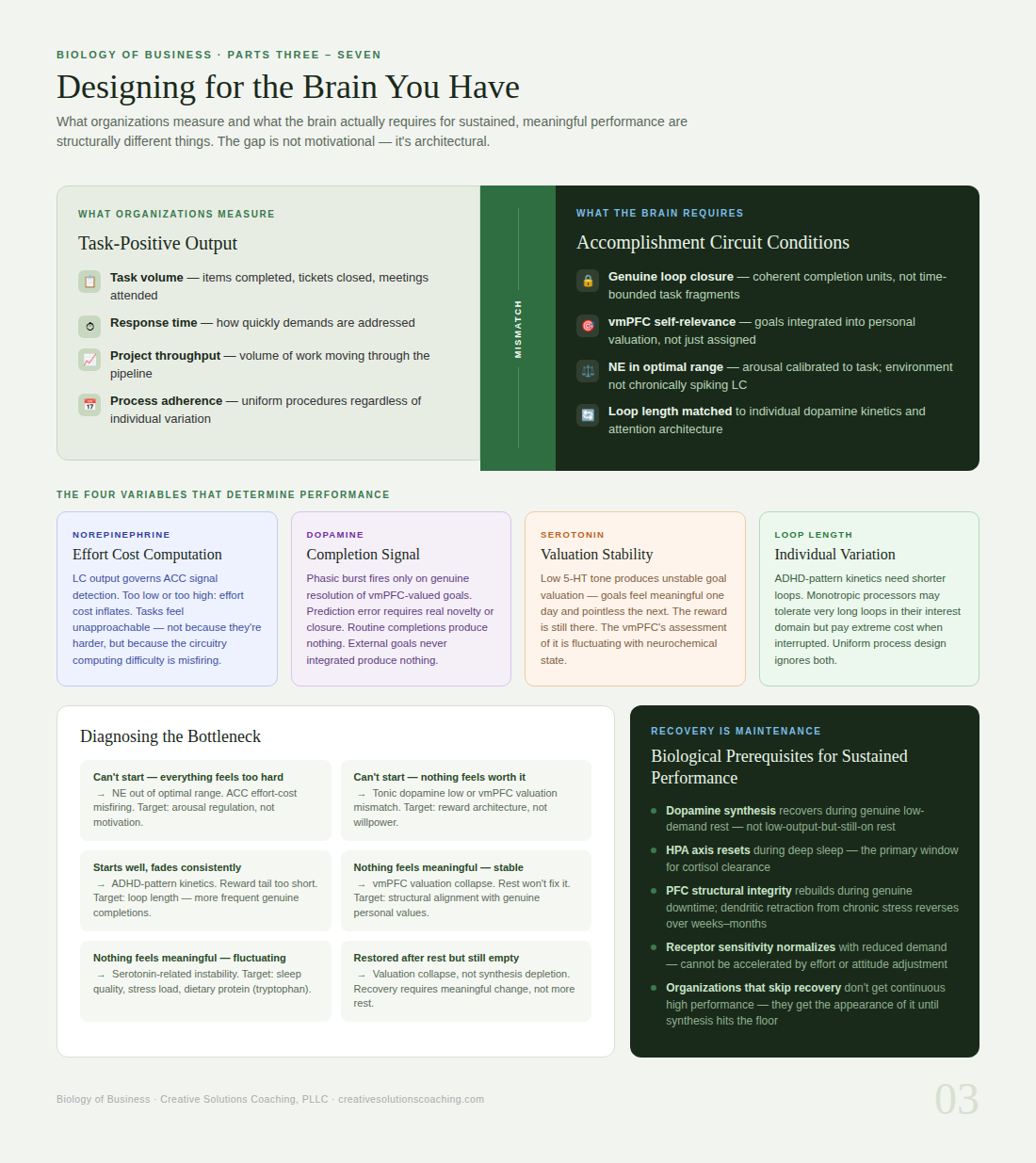

The Neuroscience of Accomplishment vs. Productivity

Why your brain tracks whether something mattered, not how much you did.

Most productivity frameworks get this wrong: the brain does not measure output. It does not tally tasks completed, hours logged, or items checked off a list. What the brain measures is whether something resolved -- whether a loop closed, whether the effort had a point.

That distinction is not semantic. It is neurological. And understanding it changes how you think about performance, motivation, burnout, and the design of work itself.

Productivity and accomplishment are two systems that most people treat as one. They share some circuitry, but they are not the same thing: they do not produce the same neurochemical response, and optimizing for one can actively undermine the other.

What follows is how these systems work, what drives them, and what happens when the environment is built around the wrong one.

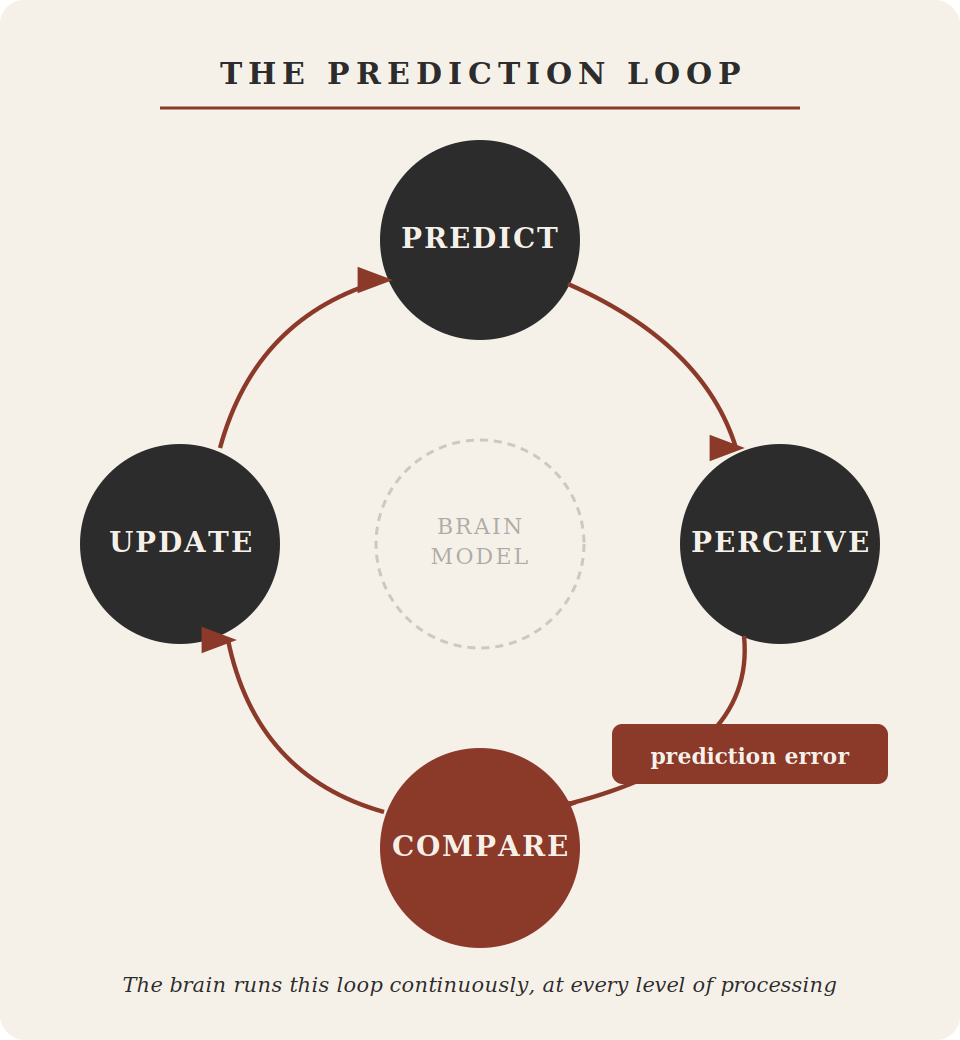

The Prediction Machine

Before a word reaches conscious awareness, before a face resolves into recognition, before a decision presents itself as a choice, the brain has already generated a prediction about what is coming. It does this continuously, automatically, and at every level of processing — from the raw assembly of sensory data into perception, all the way up to the high-level expectations that shape how a leader reads a room or how a team interprets a change in strategy.

Building a Life That Does Not Require Burnout to Function

After burnout, most people spend months or years in recovery. They rest. They reduce demands. They slowly rebuild capacity. And then they face a choice: do they return to the life that caused burnout, hoping they can manage it better this time? Or do they build something different?

Many people choose the first option because it feels easier. The systems were already in place. The routines were established. Going back seems simpler than starting over. But going back recreates the conditions that caused collapse. Within months — sometimes weeks — the cycle restarts.

The way to break this pattern is not managing the old life better. It is building a new life that fits the nervous system you actually have. This is not about lowering expectations or giving up on goals. This is about designing systems that work with your neurology instead of against it.

Workplace Survival for Autistic Adults

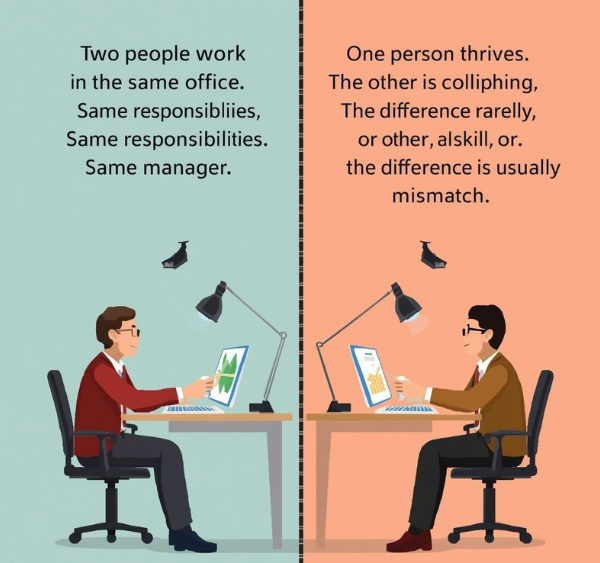

Two people work in the same office. Same role. Same responsibilities. Same manager. One person thrives. The other is collapsing. The difference is rarely effort, skill, or commitment. The difference is usually mismatch.

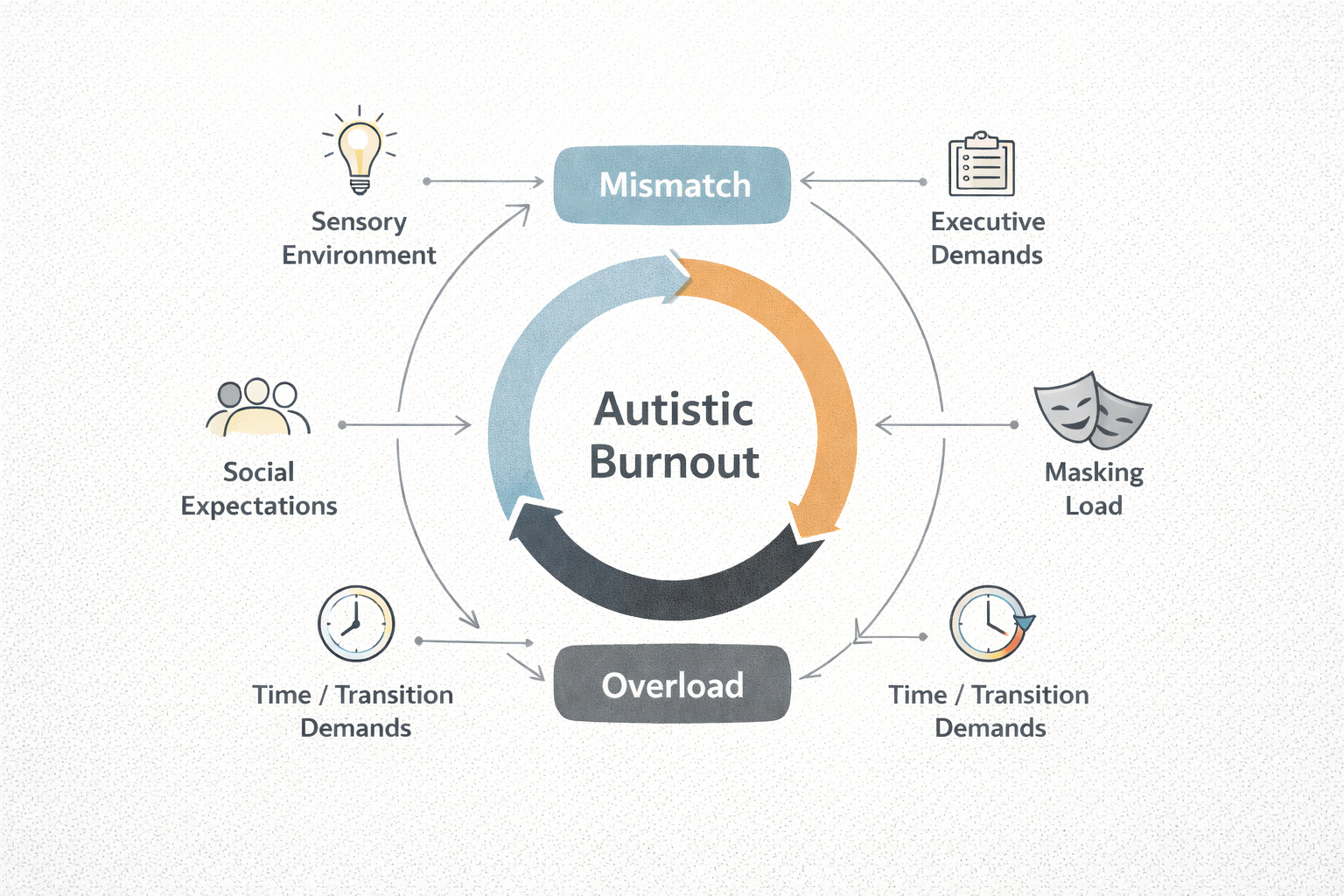

For autistic adults, workplaces are often designed in ways that create chronic overload. Open office plans. Constant interruptions. Unclear expectations. Rapid task-switching. Social demands layered on top of technical work. Fluorescent lighting. Background noise. Meetings that could have been emails. None of these are inherently problems. They become problems when they exceed what a nervous system can sustainably handle.

For neurotypical employees, these conditions may be annoying but manageable. For autistic employees, they can be the difference between functioning and burnout. This is not about being less capable. This is about environments that do not match neurological needs.

Burnout at work is not personal failure. It is environmental mismatch. When the environment changes, functioning can improve. When it cannot change, leaving is self-preservation — not defeat.

Recovering From Autistic Burnout: Structural Change, Not Just Rest

Recovery from autistic burnout is not about getting back to normal. It is about building a new normal that matches capacity rather than overriding it. This takes time. It takes support. It takes structural change. And it takes releasing the belief that struggling means failing.

First: Reduce & Rest

Lower demands to the absolute minimum. This is triage, not failure.

Then: Grieve & Rebuild

Work on both pathways simultaneously: environmental and narrative.

Always: Change the Conditions

Returning to what caused burnout recreates it. Structural change is permanent.

The Three Stages: How Burnout Happens and Where to Intervene

The hardest thing about burnout is that it's most treatable before it looks like burnout. Stage One — chronic mismatch — can persist for years while functioning appears intact. Stage Two strips away sleep, sensory tolerance, and executive function one layer at a time. By Stage Three, the nervous system enforces rest whether the person wants it or not. This article maps all three stages, what each one feels like from the inside, and where intervention is still possible.

What Autistic Burnout Actually Is

You wake up exhausted. You slept. You rested. You cancelled things, said no, took the weekend. And still, something that used to be easy is not easy anymore. You sit with the task. You know how to do it. You have done it a hundred times. And your brain will not move.

This is not laziness. This is not a bad week. This is what happens when a nervous system has been asked to give more than it has, for longer than it should, without enough time to come back.

Autistic burnout does not arrive suddenly. It accumulates. And it does not leave with rest alone, because rest does not change the thing that caused it. The job is still there. The noise is still there. The performance of being fine is still there. And so the depletion continues, quietly, until it cannot be managed anymore.

This article is about what burnout actually is, why it is so often mistaken for something else, and what recovery requires.